News Search Ranking

- News Search Ranking: Overview

- News Search Ranking: Facts and Information

- News Search Ranking: Tutorial and Course

- News Search Ranking: References

Tutorial and Course for News Search Ranking by SEO University, including facts and information about News Search Ranking.

News Search Ranking: Overview

Tutorial and Course for News Search Ranking is the ultimate SEO tutorial and course created by SEO University to help you to learn and understand news search ranking and other related SEO technologies.

Tutorial and Course for News Search Ranking describes a few algorithms for news search engines, including ranking algorithms and clustering algorithms for news search engines. For ranking problems, although we can follow the learning-to-rank framework, it is important to consider the freshness factor that plays a critical role in user experience. We proposed a few unique aspects for this task, such as data collection, data sampling, editorial judging, and evaluations metrics. We also deep-dived into a real user search log to get a better understanding of user behavior and better modeling. For clustering problems, we presented a system that involves clustering the entire news corpus with an offline clustering algorithm and handling the incoming streaming data with incremental clustering algorithm. The output from the offline and incremental clustering algorithms is then utilized for improving the real-time query-based clustering.

News Search Ranking: Facts and Information

News search is one of the most important Internet user activities. For a commercial news search engine, it is critical to provide users with the most relevant and fresh ranking results. Furthermore, it is necessary to group the related news articles so that users can browse search results in terms of news stories rather than individual news articles. For the ranking problem, the main challenge is achieving appropriate balance between topical relevance and freshness. For the clustering problem, the main challenge is in grouping related news articles into clusters in a scalable mode.

News Search Ranking: Tutorial and Course

News Search Ranking: The Learning-to-Rank Approach

The main challenge for ranking in news search is how to make appropriate balance between two factors: Relevance and freshness. Here relevance includes both topical relevance as well as news source authority.

A widely adopted approach in practice is to use a formula to combine relevance and freshness.

Many prior works have exploited the temporal dimension in searches. For example, Baeza-Yates et al. studied the relation among Web dynamics, structure, and page quality and demonstrated that PageRank is biased against new pages. In T-Rank Light and T-Rank algorithms, both activity (i.e., update rates) and freshness (i.e., timestamps of most recent updates) of pages and links are taken into account in link analysis. Cho et al. proposed a page quality ranking function in order to alleviate the problem of popularity-based ranking, and they used the derivatives of PageRank to forecast future PageRank values for new pages. Nunes proposed to improve Web information retrieval in the temporal dimension by combining the temporal features extracted from both individual documents and the whole Web. Pandey et al. studied the tradeoff between new page exploration and high-quality page exploitation, which is based on a ranking method to randomly promote some new pages so that they can accumulate links quickly.

Temporal dimension is also considered in other information retrieval applications. Del Corso et al. proposed the ranking framework to model news article generation, topic clustering, and story evolution over time, and this ranking algorithm takes publication time and linkage time into consideration as well as news source authority. Li et al. proposed a TS-Rank algorithm, which considers page freshness in the stationary probability distribution of Markov chains, since the dynamics of Web pages are also important for ranking. This method proves effective in the application of publication search. Pasca used temporal expressions to improve question-answering results for time-related questions. Answers are obtained by aggregating matching pieces of information and the temporal expressions they contain. Furthermore, Arikan et al. incorporated temporal expressions into a language model and demonstrated experimental improvement in retrieval effectiveness.

Recency query classification plays an important role in recency ranking. Diaz determined the newsworthiness of a query by predicting the probability of a user clicking on the news display of a query. König et al. estimated the clickthrough rate for dedicated news search results with a supervised model, which is to satisfy the requirement of adapting quickly to emerging news event.

Learning-to-rank algorithms have shown significant and consistent success in various applications. Such machine-learned ranking algorithms learn a ranking mechanism by optimizing particular loss functions based on editorial annotations. An important assumption in those learning methods is that document relevance for a given query is generally stationary over time, so that, as long as the coverage of the labeled data is broad enough, the learned ranking functions would generalize well to future unseen data. Such an assumption is often true in Web searches, but it is less likely to hold in news searches because of the dynamic nature of news events and the lack of timely annotations.

A typical procedure is as follows:

- Collect query-URL pairs.

- Ask editors to label the query-URL pairs with relevance grades.

- Apply a learning-to-rank algorithm to the train ranking model.

Traditionally, in learning-to-rank, editors label query-URL pairs with relevance grades, which usually have four or five values, including perfect, excellent, good, fair, or bad. Editorial labeling information is used for ranking model training and ranking model evaluation. For training, these relevance grades are directly mapped to numeric values as learning targets.

For evaluation, we desire an evaluation metric that supports graded judgments and penalizes errors near the beginning of the ranked list. We extend the learning-to-rank algorithm in news searches, for which we mainly make two modifications due to the dynamic nature of the news search:

- Training Sample Collection

- Editorial Labeling Guideline

Training Sample Collection

The training sample collection has to be near real time for news searches by the following steps:

- 1. Sample the latest queries from the news search query log.

- 2. Immediately get the candidate URLs for the sampled queries.

- 3. Immediately ask editors to do judgments on the query-URL pairs with relevance and freshness grades.

We can see that all the steps need to be accomplished in a short period. Therefore, the training sample collection has to be well planned in advance; otherwise, any delay during this procedure would affect the reliability of the collected data. If queries are sampled from an outdated query log or if all of the selected candidate URLs are outdated, they cannot represent the real data distribution. If editors do not label query-URL pairs on time, it will be difficult for them to provide accurate judgments, because editors’ judgments rely on their good understanding of the related news events, which becomes more difficult as time elapses.

Editorial Labeling

In a news search, editors should provide query-URL grades on both traditional relevance and freshness. Although document age is usually available in news searches, it is impossible to determine a document’s freshness based solely on document age. A news article published one day ago could either be very fresh or very old, depending on the nature of the related news event. So, we ask editors to provide subjective judgments on document freshness, which can have a few different grades, such as the following:

- Very Fresh: latest documents (promote grade: 1)

- Fresh (promote grade: 0)

- A little bit outdated (demote grade: -1)

- Totally outdated and useless (demote grade: -2)

For ranking model training, we combine relevance grade and recency grade for a new grade as learning target. An example of such a grade combination is shown in the list. If the document is very fresh, we promote one grade from its original relevance grade. For example, if a document has a good grade on relevance and a fresh grade on freshness, its final grade is excellent, which is one grade higher than it is relevance grade, good. If the document is fresh, we neither promote nor demote. If the document is a little bit outdated, we demote one grade from its original relevance grade. If the document is totally outdated and useless, we demote two grades.

For ranking model evaluation, we can compute DCG values based on either the combined grades or the relevance grade and freshness grade separately.

To evaluate freshness in isolation, we also include a freshness metric based on DCG, called DCF:

A query may have multiple very fresh documents - for example, when multiple news sources simultaneously publish updates to some ongoing news story. Note that DCF is a recency measurement that is independent of overall relevance. Therefore, when we evaluate a ranking, we should first consider demoted NDCG, which represents the overall relevance, then inspect the value of the DCF. We also define normalized discounted cumulative freshness (NDCF) in the same way as for NDCG.

News Search Ranking: Joint Learning Approach from Clickthroughs

Given the highly dynamic nature of news events and the sheer scale of news reported around the globe, it is often impractical for human editors to constantly keep track of the news and provide timely relevance and freshness judgments.

From our testing, we observe that the clicks are not strictly correlated with the demoted grades; the average Pearson correlation between them across the queries is 0.5764 with a standard deviation 0.6401. The main reason for this inconsistency is the hard demotion rule: Users might have different demotion preferences for different queries, and it's almost impossible for an editor to predefine the combination rules, given the plurality of possibilities. As a result, the uncertainty from this heuristically derived ranking grade will limit the performance of subsequent learning-to-rank algorithms.

Suppose that, when a user submits a query to a news search engine and gets a list of ranked news documents, he would first judge the usefulness of each document by his underlying sense of relevance and freshness and give it an overall impression grade based on his preference for relevance and freshness at that particular time. Once he has such impressions in mind, he would deliberately click the documents most interesting to his and skip all the others.

Inspired by testing results, we proposed to model the users' click behavior in news searches as a direct consequence of examining the relevance and freshness aspects of the returned documents. Besides, for different queries, users’ relative emphasis on these two aspects can vary substantially, reflecting the searching intention for the specific news event. Therefore, a good ranking function should be able to infer such a tradeoff and return the optimally combined ranking results for individual queries. However, we cannot explicitly obtain users’ relevance/freshness judgments and the preferences for these two aspects, since their evaluation process is not directly observable from the search engine. Fortunately, users' click patterns are recorded, from which we can assume that the clicked documents are more meaningful to them than the unclicked ones. Therefore, we model relevance and freshness as two latent factors and assume that a linear combination of these two, which is also latent, generates the observed click preferences.

To better determine the temporal property of the news documents and detect the recency preference imposed for the query, we will use a set of novel temporal features from click statistics and content-analysis techniques.

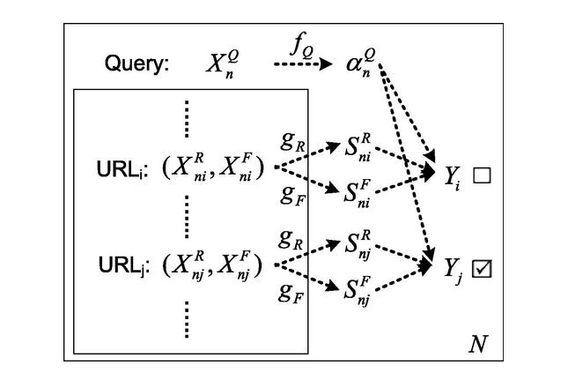

Intuitive illustration of the proposed Joint Relevance Freshness Learning (JRFL) model. The user-issued query and the corresponding returned URLs are represented by their features on the left part. Dashed-arrow lines in the middle indicate the assumed user’s judging process before he clicks. The check boxes on the right record the clicked URLs.

The basic assumption of our proposed model is that a user's overall impression assessment by combining relevance and freshness for the clicked URLs should be higher than the unclicked ones, and such a combination is specific to the issued query. Therefore, our method falls into the pairwise learning-to-rank framework.

A classic pairwise learning-to-rank algorithm uses only one scoring function to account for all the observed click preferences, where different ranking criteria cannot be easily incorporated. Besides, even though the RankSVM model shares a similar object function as JRFL, they are still quite different: JRFL simultaneously learns a relevance model and a freshness model and utilizes a query-specific model to leverage these two aspects to explain the observed click patterns. Neither RankSVM nor GBRank deal with such query-specific multicriteria ranking. In our problem, all those factors are latent and estimated automatically from the click logs.

In this work, we proposed a joint learning framework, Joint Relevance Freshness Learning (JRFL), for modeling the topical relevance and freshness, and the query-specific relative preference between these two aspects based on the clickthroughs for the news search task. Experiments on large-scale query logs and editorial annotations validate the effectiveness of the proposed learning method. We only instantiated the proposed joint learning framework by linear models, but many alternatives exist. It would be meaningful to employ other types of nonlinear functions to enhance JRFL. For example, using logistic functions can naturally avoid the range constraints over query weights in optimization. Besides, in our current setting, the preference between relevance and freshness is assumed to be only query-dependent. It would be interesting to extend this to user-dependent, i.e., personalized, search. By defining a proper set of user-related features, or profiles, the proposed JRFL can be easily applied in such user-centric retrieval environments. What is more, the proposed model can also be flexibly extended to other retrieval scenarios, where usefulness judgment is beyond pure topical relevance, such as opinions in blog searches and distance in location searches.

News Search Ranking: News Clustering

Clustering of search results provides a unified view of the search results by grouping together similar documents. This allows the user to examine all the related categories of the search results without having to go through hundreds of items. Clustering becomes more important in the context of a domain such as news because there could be thousands of related articles for a given query. Most of these news articles are related, however, and if we group the articles in terms of related stories, then the number quickly reduces to a handful. This makes it easier for the user to browse the search results in terms of news stories rather than individual news articles. Furthermore, news sites re-publish articles from different sources to provide comprehensive coverage of a particular topic. This further exacerbates the problem by increasing the redundancy in the search results. Instead of leaving the search result organization to the user, a clustered representation of search results provides an overview to explore a topic.

The news search also has another component of recency, which introduces additional complexities. Although two articles are very related in terms of document content, if they are far apart in the time axis, they might correspond to a repetitive event, such as California earthquake. Even though such events occur multiple times, they correspond to different clusters because they are totally independent events and the user may be interested in looking at them individually.

Most of the work on search result clustering focused more on salient feature extraction and utilizing this information to cluster the search results. However, the salient features do not provide the complete picture about the document. Sometimes the most important information about the news article is buried deep in the document body. Later we provide experimental evidence that shows that the body of the document provides a great boost in the performance of the clustering system. Other approaches utilize standard clustering algorithms such as a hierarchical agglomerative clustering (HAC) algorithm and partitional algorithms such as K-means. In the work of Zamir O, Etzioni O., the clustering problem is modeled as phrase extraction from documents and then uses a suffix tree-based clustering algorithm to group documents that contain common phrases. However, we show that the query information is vital in clustering the search results, and we also show how to utilize this information in the clustering algorithm.

Other research related to clustering the search results focuses on utilizing various matrix factorization techniques such as singular value decomposition, nonnegative matrix factorization, local nonnegative matrix factorization, and concept decomposition. These clustering algorithms focus on extracting quality descriptions for the clusters by finding the labels with matrix factorization on the word features from snippets of search results. Although the descriptions may be important in Web search results clustering, they do not play a vital role in news search results, since the most representative document can be used to show the summary of the cluster. Another algorithm called DisCover clusters search results by maximizing the coverage and distinctiveness of the clusters.

Another line of work is related to using named entities to browse the clusters of search results. However, this work mainly focuses on extracting the named entities from search results, organizing them into clusters using the named entities. Other related work identifies discriminative, ambiguous, and common terms by constructing a relational graph of terms and using them to cluster Web search results.

It is clear that the named entities that occur in the body of the documents are valuable for producing quality clusters of search results. However, it is expensive to process the entire documents to cluster the search results in real time as it impacts the user experience. In this work we propose a scalable clustering technique that can provide a fast execution time without sacrificing accuracy by utilizing the body features in terms of offline clusters that provide a prior of the document relatedness of search results. The offline clusters are obtained by a process that is run offline. We also handle incremental clustering for documents that arrive continuously.

In fact, the offline clusters that are obtained by clustering the entire corpus provide a useful representation, and the search results can be organized into clusters by using this information alone. Some of the existing work exactly follows these methodologies. However, such organization would have a fixed granularity of clustering, which may not be desirable for all queries. Some queries can be broad and require a higher level of clustering hierarchy; other queries can be very specific and require a fine level of clustering hierarchy. If the offline clusters alone are used to organize the search results, the granularity of the clustering cannot be adjusted according to query. However, the solution we provide overcomes this problem by applying a clustering algorithm on the search results in real time by utilizing the offline clusters as features.

The contributions of our work are two fold:

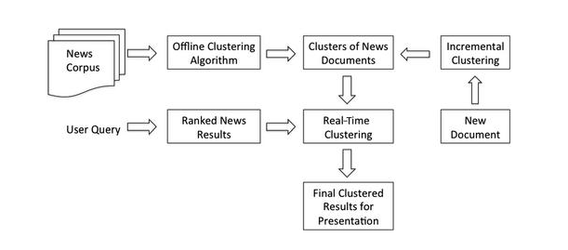

- We provide a unified framework for scalable online clustering and detail the architecture of the system that describes each of the three components: offline clustering, incremental clustering, and real-time clustering.

- We propose novel techniques to cluster search results in real time by incorporating the query information in the clustering algorithm itself.

To cluster the search results in real time, we need just the top-ranked search results. However, the user would like to browse all the related articles in relation to a particular news story, which might not be covered in the top-ranked news results. To address this issue, we rely on offline clustering that clusters the entire news corpus. We utilize the offline clusters as an additional source of information in real-time clustering, which helps its performance as well. However, this offline batch processing of documents, especially news articles, does not work efficiently, because news articles arrive continuously every second. To address this issue, we also incorporate incremental clustering solutions to address the streaming data clustering problem. The following Figure shows the architecture of our system that describes individual components that address these needs.

The architecture of the news search result clustering.